Having a home lab is a vital part of self-progression. I have always re-invested in myself, committing to self-study and home learning, and it has helped my career progression. So after my pizza box mistake, I needed some toys to play with to continue that progression.

I looked into a number of options, including Supermicro servers, HP MicroServers and even building my own hosts in micro ATX cases, but ultimately I don’t think anything gives you the same bang for your buck (or £ in my case) as the Intel NUC.

The Intel NUC is the “official” unofficial VMware home lab! There are posts all over the tinterweb from vSuperstars about why these bite-size computers are ideal for a home lab. The downside is that they are not that cheap.

My BOM (bill of materials) doesn’t really differ from anyone else’s; however, if you’ve managed to stumble your way to my blog first, it goes like this:

- 3 x 5GB USB 3.0 thumb drives (To install ESXi on)

That lot cost me over £2,400 (don’t tell the missus!!)

The above will build you a 3-node all-flash vSAN cluster (you will also need a switch).

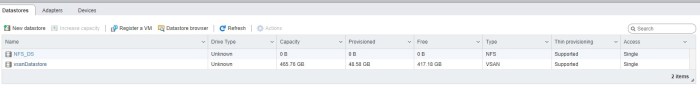

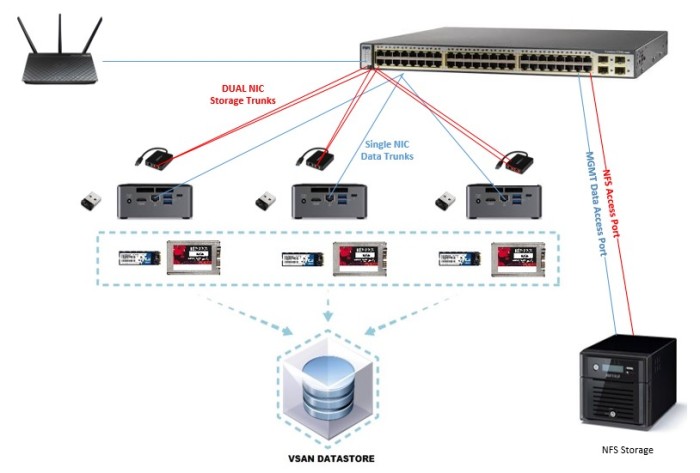

The only thing that probably differs in my lab from others who have chosen NUCs is that I’m using an M.2 drive for the capacity tier and an SSD for the caching tier. The only reason for this is that I still had the 60GB SSDs from my pizza box mistake, so I put them to good use!

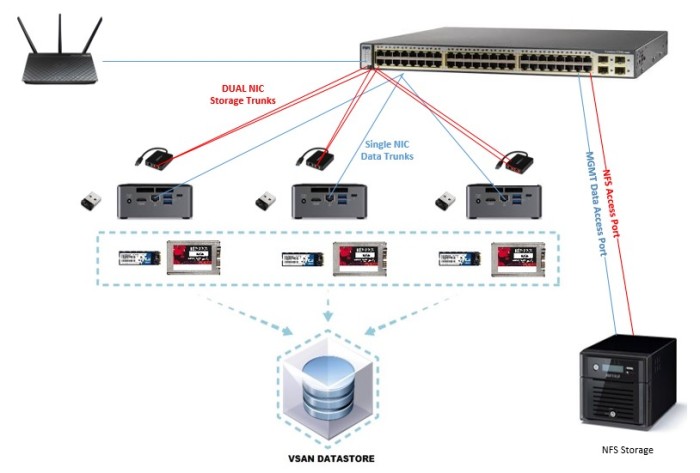

The NUC only has one onboard NIC, so the USB to dual-port Gigabit adapter gives me three NICs per NUC (try saying that fast with a mouthful of Doritos!)

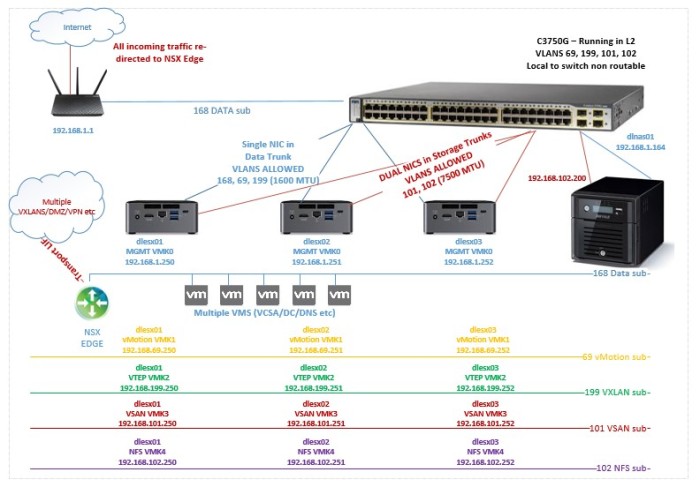

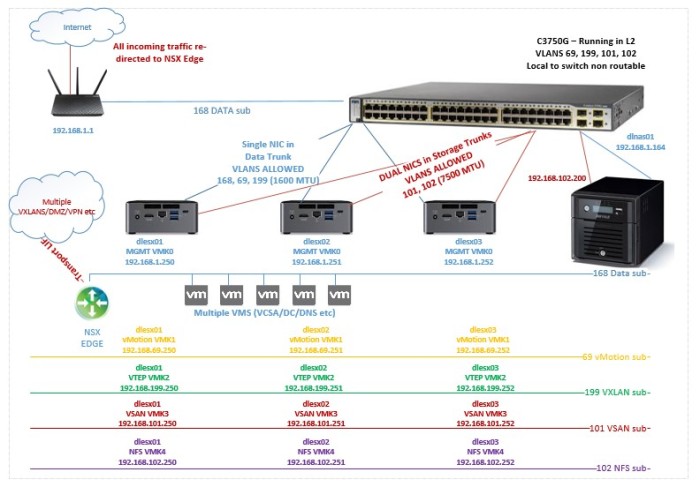

Two NICs carry vSAN and NFS traffic (my “storage NICs”) and one carries everything else (vMotion, data, mgmt).

I already had a Buffalo TeraStation and a Cisco 3750, which I used to complete the lab setup. The 3750 is used as layer 2 only. I created non-routable subnets for vMotion, vSAN, NFS and VXLAN (NSX is in the lab, more on that later).

Since all devices are patched into the same 3750 switch, I didn’t have to worry about routing those subnets outside the switch, but carving them up into their own VLANs helps limit broadcast traffic.
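If you want to plan something similar, here is a rough Python sketch of carving one private block into a subnet per traffic type. The address block and the VLAN IDs are made up for illustration (they are not the values from my switch), so swap in your own.

```python
import ipaddress

# Hypothetical values for illustration only: not the real lab addressing.
LAB_BLOCK = ipaddress.ip_network("172.16.0.0/22")
TRAFFIC_VLANS = {"vMotion": 10, "vSAN": 20, "NFS": 30, "VXLAN": 40}


def carve_subnets(block, vlans, prefix=24):
    """Hand out one /prefix subnet from block to each traffic type, in order."""
    subnets = block.subnets(new_prefix=prefix)
    plan = {}
    for name, vlan_id in vlans.items():
        # next() raises StopIteration if the block is too small for the list
        plan[name] = {"vlan": vlan_id, "subnet": next(subnets)}
    return plan


plan = carve_subnets(LAB_BLOCK, TRAFFIC_VLANS)
for name, entry in plan.items():
    print(f"VLAN {entry['vlan']:>3}  {name:<8} {entry['subnet']}")
```

A /22 splits into exactly four /24s, which happens to match the four traffic types here. None of them need a gateway because nothing is routed off the switch.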

Mgmt and VM data sit on the same subnet. It’s not ideal… but it’s a lab.

The TeraStation is used to house ISOs/OVAs and maybe eventually the occasional low I/O VM. It has two NICs: one is on the non-routable NFS subnet, and the other is on my mgmt/data subnet so I can manage it!

All NUC NICs (USB and onboard) support an MTU of more than 1600, which means I can have NSX in my lab. Unfortunately, they don’t quite support jumbo frames. Again, it’s a lab, but in real-world scenarios vSAN, NFS and probably vMotion too should run across jumbo-frame-enabled NICs. vSAN should also ideally be backed by a 10Gb switch when using all flash, but the 1Gb switch in my case works just fine… it’s a lab… just tell yourself “I won’t buy a 10Gb switch… I don’t need a 10Gb switch…”
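For anyone wondering where the 1600 figure comes from, here is a small Python sketch of the arithmetic. VXLAN wraps each guest frame in extra headers (about 50 bytes with an IPv4 outer header), so a standard 1500-byte guest MTU needs at least 1550 on the physical network, and 1600 is the commonly recommended setting because it leaves some headroom. The function names are my own, and the 9000-byte jumbo value is the conventional figure rather than something I measured on the NUCs.

```python
# Bytes added on top of the guest's IP packet when it is VXLAN-encapsulated
# (IPv4 outer header, counted against the physical NIC's MTU).
INNER_ETHERNET = 14
VXLAN_HEADER = 8
OUTER_UDP = 8
OUTER_IPV4 = 20
VXLAN_OVERHEAD = INNER_ETHERNET + VXLAN_HEADER + OUTER_UDP + OUTER_IPV4

JUMBO_MTU = 9000  # conventional jumbo frame MTU (assumption, not a NUC spec)


def required_underlay_mtu(guest_mtu=1500):
    """Smallest physical MTU that carries a guest packet without fragmenting."""
    return guest_mtu + VXLAN_OVERHEAD


def supports_vxlan(nic_mtu, guest_mtu=1500):
    return nic_mtu >= required_underlay_mtu(guest_mtu)


def supports_jumbo(nic_mtu):
    return nic_mtu >= JUMBO_MTU


# Example with a NIC capped at 1600, the minimum the post says the NUCs handle
nic_mtu = 1600
print("VXLAN overhead:", VXLAN_OVERHEAD)
print("Needs at least:", required_underlay_mtu())
print("VXLAN OK:", supports_vxlan(nic_mtu))
print("Jumbo OK:", supports_jumbo(nic_mtu))
```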

So “back of a fag packet” logical & physical designs…

Physical

Logical

There are a few things to consider when building the NUC lab.

- Some versions of ESXi don’t support the NUC/USB NICs, so you’ll need to create a custom ISO image with the required VIBs. That MAY have changed with versions later than 6.5 U1 (I installed 6.5 U1); I’ve not kept in the loop with what does and doesn’t work. William Lam’s blog or virten.net may be able to help you on that front.

My details to create a custom ISO for 6.5 U1 can be found here

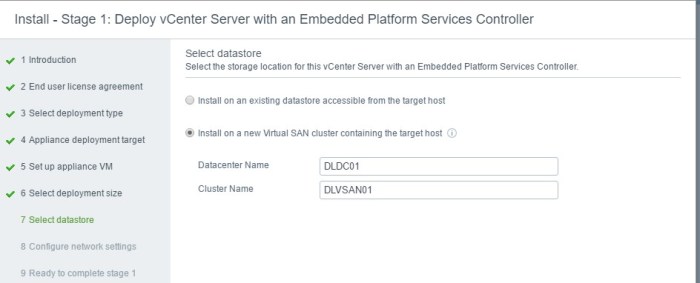

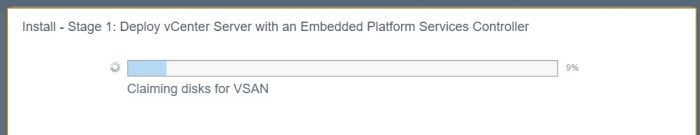

- If you’re planning to use vSAN as your sole storage resource, then you’ll need to “bootstrap” vSAN to deploy your vCenter. vSAN configuration is predominantly done in vCenter, and since you can’t deploy a vCenter without storage, you get stuck in a chicken-and-egg scenario. William Lam has some great tech blogs on how to do this manually, but since vCenter 6.5 U1 there’s an option within the VCSA deployment GUI to configure vSAN as you deploy the VCSA. I’ve briefly blogged about that here.

The lab performs really well. I’ll throw up a benchmarking blog at some point, but for now I hope you found the above useful!