This blog post attempts to collate all the details on the Platform Services Controller (PSC).

First and foremost, let’s call out some things you can’t do, so you don’t read this whole blog only to find out your use case isn’t supported…

WHAT ISN’T SUPPORTED

- You CAN’T merge vSphere domains in vSphere 6.0. That is, if you have two vCenters with embedded (or external) PSCs that were deployed independently of one another, they can’t be merged into a single vSphere domain, whether you used custom domain names or the default “vsphere.local” domain. [ref1]

- You CAN’T migrate a vCenter Server from one vSphere domain to another. [ref1]

- You CAN’T use Enhanced Linked Mode between two vCenters in separate domains, as Enhanced Linked Mode requires all PSCs to be in the same domain. [ref1]

- Snapshots: this is a contentious one. If a PSC is replicating to other PSCs, within the same site or across sites, then rolling back to a previous snapshot is not supported, as it can leave that PSC out of sync with its sibling PSCs. This also applies to image-level backups. It does not apply to standalone PSCs. [ref1] [ref6] [ref7]

WHAT IS SUPPORTED

- You can migrate from an embedded PSC to an external PSC. There are some considerations when converting: certificates, and integrated applications such as SRM, vRO and vRA, need reconfiguring/repointing at the new PSC. When you migrate to an external PSC you use the cmsso-util reconfigure command rather than the cmsso-util repoint command, because “reconfigure” also decommissions the embedded PSC. [ref2]

- You can repoint a vCenter server to another PSC within the same site, providing of course that the PSC is in the same vSphere domain. [ref3]

- You CAN repoint a vCenter Server in SSO site 1 to a PSC in SSO site 2, providing of course that both sites are members of the same vSphere domain! HOWEVER, the prerequisite is that vCenter Server is running 6.0 Update 1 or later. THIS FUNCTION HAS SINCE BEEN REMOVED IN VSPHERE 6.5, so it is only supported from 6.0 Update 1 up to, but not including, 6.5. Instructions on how to do this can be found in the references below. [ref4] In 6.5, or in 6.0 before Update 1, if no functional PSC instance is available in the same site as the vCenter, you must deploy or install a new PSC instance in that site as a replication partner of a functional PSC instance from another site.

PSC Maximums

There’s a great blog post by leading vCommunity legend William Lam on the PSC maximums in 6.5

http://www.virtuallyghetto.com/tag/platform-service-controller

Those numbers vary slightly from those in 6.0

| | vSphere 6.0 [ref7] | vSphere 6.5 [ref8] |
|---|---|---|
| Maximum PSCs per vSphere domain | 8 | 10 |
| Maximum linked VCs | 10 | 10 |
| Maximum PSCs per site behind load balancer | 4 | 4 |
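As a rough illustration, the maximums above can be turned into a quick sanity check for a proposed design. This is only a sketch: the limits are copied from the table, while the function name, the dictionary keys and the example numbers are my own and not part of any VMware tooling.

```python
# Limits copied from the table above; everything else here is illustrative.
PSC_MAXIMUMS = {
    "6.0": {"pscs_per_domain": 8, "linked_vcs": 10, "pscs_per_site_behind_lb": 4},
    "6.5": {"pscs_per_domain": 10, "linked_vcs": 10, "pscs_per_site_behind_lb": 4},
}


def check_design(version, pscs_per_domain, linked_vcs, pscs_per_site_behind_lb):
    """Return a list of violations; an empty list means the design is within limits."""
    limits = PSC_MAXIMUMS[version]
    proposed = {
        "pscs_per_domain": pscs_per_domain,
        "linked_vcs": linked_vcs,
        "pscs_per_site_behind_lb": pscs_per_site_behind_lb,
    }
    return [
        f"{name}: {proposed[name]} exceeds the {version} maximum of {limit}"
        for name, limit in limits.items()
        if proposed[name] > limit
    ]


if __name__ == "__main__":
    # Hypothetical design: 9 PSCs, 6 linked VCs, 3 PSCs behind each load balancer.
    for target in ("6.0", "6.5"):
        print(target, check_design(target, 9, 6, 3) or "within limits")
```

With those example numbers, nine PSCs would be over the 6.0 limit but within the 6.5 limit.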

Musing/Question – I’ve struggled to find the maximum number of sites within a vSphere domain listed in any VMware documentation. I’ll update this blog if I find anything conclusive. I’m assuming at this point that it’s limited by the number of PSCs allowed in a vSphere domain.

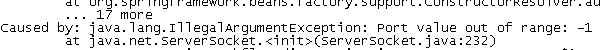

PSC Replication Topologies

When considering a PSC design, there are six high level PSC topologies VMware recommend:

- vCenter Server with Embedded PSC

- vCenter Server with External PSC

- PSC in Replicated Configuration

- PSC in HA Configuration

- vCenter Server Deployment Across Sites

- vCenter Server Deployment Across Sites with Load Balancer

VMware has provided a handy decision tree for those yet to make their deployment decision. Shout out to @Emad_younis & @eck79. [ref9]

[Image: vSphere topology decision tree poster]

PSCs are multi-master, but the default replication topology for the PSC is one to one. Where more than two PSCs exist within a vSphere domain, a ring topology is recommended, as it prevents a break in replication when a single PSC fails.
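To make the ring idea concrete, here is a minimal sketch that works out which replication agreements a ring needs and which are still missing from what you already have. The host names are hypothetical, and it assumes agreements are two-way, so direction is ignored when comparing.

```python
def ring_agreements(pscs):
    """Pair each PSC with the next one, wrapping around to close the ring."""
    if len(pscs) < 3:
        raise ValueError("a ring needs at least three PSCs")
    return [(pscs[i], pscs[(i + 1) % len(pscs)]) for i in range(len(pscs))]


def missing_agreements(pscs, existing):
    """Agreements still to be created, treating each agreement as two-way."""
    have = {frozenset(pair) for pair in existing}
    return [pair for pair in ring_agreements(pscs) if frozenset(pair) not in have]


if __name__ == "__main__":
    # Hypothetical PSC names; a daisy chain psc1 <-> psc2 <-> psc3 already exists.
    pscs = ["psc1.example.local", "psc2.example.local", "psc3.example.local"]
    chain = [(pscs[0], pscs[1]), (pscs[1], pscs[2])]
    for source, destination in missing_agreements(pscs, chain):
        print(f"agreement needed: {source} <-> {destination}")
```

In the daisy-chain case only one extra agreement is reported, the one that closes the loop between the last PSC and the first.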

Jeff Green in his virtual data cave has a good blog post below, which is relevant for 6.0 & 6.5

https://virtualdatacave.com/2017/02/vsphere-6-0-psc-replication-ring-topology/

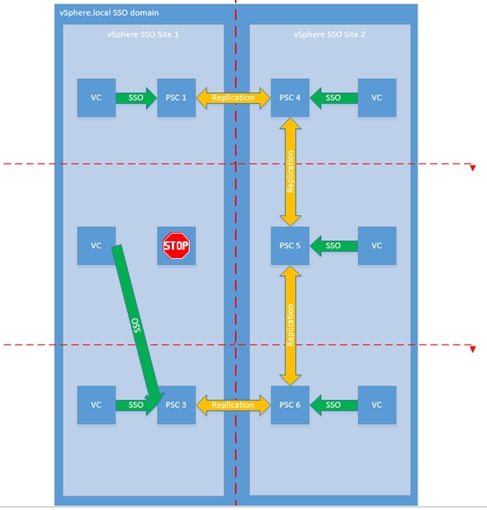

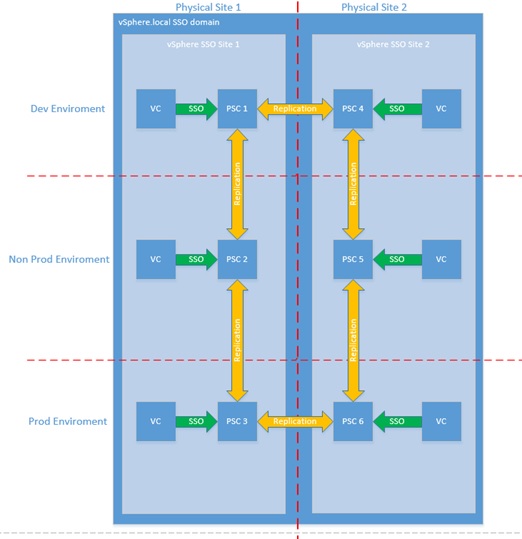

Expanding a little: in my example below, we have one vSphere (SSO) domain, two sites and three environments (Prod, Non Prod & Development). Each environment has physical infrastructure at both sites for BCDR purposes, and each physical piece of infrastructure has a vCenter and a PSC. In this example, a ring topology would look like the one below; two PSCs at the same site would need to fail in order to break replication.

Where PSC partner replication occurs over the WAN from site 1 to site 2, it would add further resilience if replication partners PSC1 & PSC4 were on a different circuit to PSC3 & PSC6. This would ensure replication continues in the event of a WAN circuit failure.

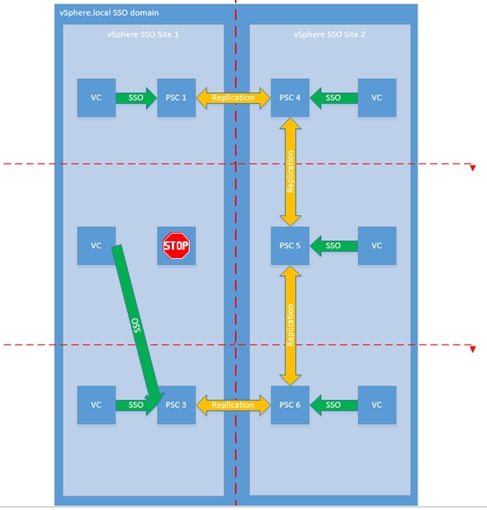

For example, if PSC2 fails, the VC can be repointed to either PSC1 or PSC3 within the same site while PSC2 is re-deployed

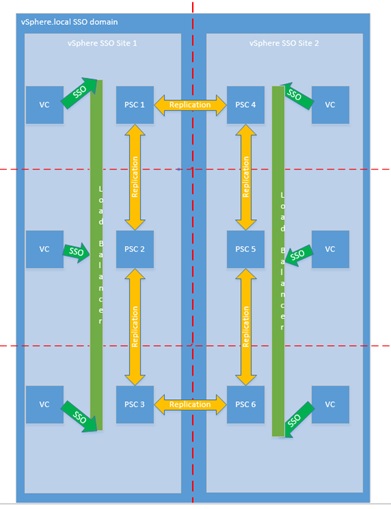

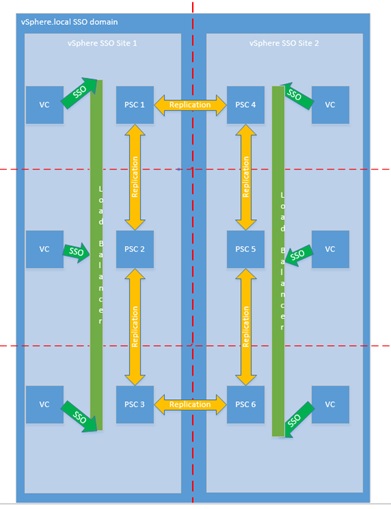
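The failure scenario above can be modelled in a few lines. This is only a sketch of my example environment: the cross-site pairs PSC1/PSC4 and PSC3/PSC6 come from the text, while the intra-site links (PSC1-PSC2-PSC3 and PSC4-PSC5-PSC6) are my assumption about how the ring is wired.

```python
from collections import deque

# Site membership and ring links for the example; intra-site links are assumed.
SITE = {"PSC1": 1, "PSC2": 1, "PSC3": 1, "PSC4": 2, "PSC5": 2, "PSC6": 2}
RING = [
    ("PSC1", "PSC2"), ("PSC2", "PSC3"), ("PSC3", "PSC6"),
    ("PSC6", "PSC5"), ("PSC5", "PSC4"), ("PSC4", "PSC1"),
]


def still_replicating(failed):
    """True if every surviving PSC can still reach every other one via agreements."""
    alive = set(SITE) - set(failed)
    if not alive:
        return True
    neighbours = {psc: set() for psc in alive}
    for a, b in RING:
        if a in alive and b in alive:
            neighbours[a].add(b)
            neighbours[b].add(a)
    # Breadth-first search from any surviving PSC.
    start = next(iter(alive))
    seen, queue = {start}, deque([start])
    while queue:
        for nxt in neighbours[queue.popleft()]:
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen == alive


def repoint_candidates(failed_psc):
    """Other PSCs in the same site that a VC could be repointed to."""
    return sorted(p for p, s in SITE.items() if s == SITE[failed_psc] and p != failed_psc)


if __name__ == "__main__":
    print(still_replicating(["PSC2"]), repoint_candidates("PSC2"))
    print(still_replicating(["PSC1", "PSC3"]))
```

Losing PSC2 alone leaves the remaining five PSCs connected, with PSC1 and PSC3 as repoint targets, whereas losing PSC1 and PSC3 together isolates PSC2 from the rest of the ring.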

In addition, you could introduce load balancers into the environment and have all 3 PSCs behind the load balancer, this would prevent the manual re-pointing of a VC in the event of PSC failure. This design would be more suited to environments that would require a highly available VC.

If each environment was its own Active Directory domain, you could configure an identity source for each domain too (see below for more details), keeping all VCs in the same vSphere domain and minimising vSphere administration while restricting access to each environment to specific AD domain credentials.

Musing/Question – If both cross-site partners use the same circuit, what happens if the cross-site PSC replication can’t occur? All PSCs will be up and replicating intra-site but not cross-site. Will a split-brain situation occur? If contradicting configurations are implemented at either site, which will take preference? I plan on creating this environment and testing; I’ll provide the results in a future blog and update this blog for reference.

What the PSC Handles

- The PSC now handles the storing and generation of the SSL certificates within your vSphere environment.

- The PSC now handles the storing and replication of your VMware license keys.

- The PSC now handles the storing and replication of your permissions via the Global Permissions layer.

- The PSC now handles the storing and replication of your Tags and Categories.

Active Directory Trusts

Another good reference covers which Microsoft Active Directory trusts are supported and can be used in vSphere SSO when using AD as an identity source. [ref10]

All PSCs should be joined to Active Directory. PSCs in the same vSphere domain can be joined to different Active Directory domains PROVIDING there is a trust relationship between those Active Directory domains. This will be relevant if you have a child Active Directory domain for different geographic locations (e.g. EMEA/AMER/APAC) or environments (e.g. Prod/Non Prod/Dev), and a different vSphere site within the same vSphere domain for each of those locations.

PSC ports

The required ports for PSC communication are listed in the following references. If you have firewalls between PSC nodes, or between PSCs & vCenters, then these ports will need to be opened. [ref10] [ref11]

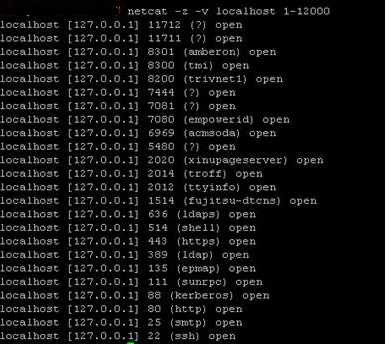

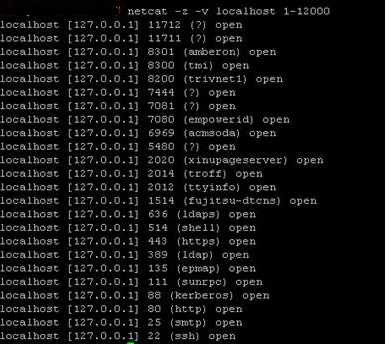

The following ports are listening on a VC with embedded PSC and on a standalone external PSC

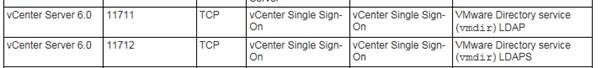

PSC replication occurs over TCP ports 389 & 2012 & UDP 389 according to [ref11]

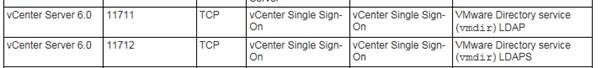

NOTE: In a real world environment I have seen VCs that have been upgraded from 5.5 to 6 with embedded PSCs replicating to each other over a WAN on 11712 & 11711 (with a firewall in-between blocking 2012)

[ref11] shows ports 11712 & 11711 as legacy and for 5.5 backwards compatibility only; however, I did find a reference here that lists 11711 & 11712 as vmdir.

https://kb.vmware.com/selfservice/microsites/search.do?language=en_US&cmd=displayKC&externalId=1012382

It may be the case that as it was previously using 11711 & 11712 for replication, it’s continued to use these ports after an upgrade?

When migrating from the embedded PSC in VC 6 (updated from 5.5) to newly deployed external PSC, replication only occurred over TCP ports 389 & 2012 & UDP 389 between external PSCs

Migrating a vCenter from an embedded PSC to an external PSC: the considerations

With a plain vanilla install of vSphere, where you’re only concerned with migrating to an external PSC, the process is relatively straightforward.

The process becomes a little more intricate when other VMware solutions are registered against the embedded PSC, as these solutions will need to be repointed to the new external PSC.

The most common solutions likely to be configured to use a PSC are vRO, SRM, NSX & vRA. Third-party backup tools that plug into the vCenter may also have a separate configuration for the PSC. In a scenario where you want to decommission the embedded PSC, these solutions will need to be repointed to the external PSC. In my experience, the PSC that these solutions point to has to be the same PSC that the VC points to.

********Update 01.09.2017********

At VMworld 2017 in Vegas, there were some good discussions around the PSC that are definitely worth a watch!

//players.brightcove.net/1534342432001/Byh3doRJx_default/index.html?videoId=5559767662001

You can use the following command to repoint a VC from one external PSC to another external PSC:

cmsso-util repoint --repoint-psc externalPSC --username administrator --domain-name vsphere.local --passwd password

However, when migrating away from an embedded PSC, you want to use the following command, which demotes the embedded PSC and then repoints to the external PSC:

cmsso-util reconfigure --repoint-psc externalPSC --username administrator --domain-name vsphere.local --passwd password
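The choice between the two commands can be captured in a tiny helper. This is just a sketch: the flags mirror the two commands above (written with double hyphens), while the function name, its parameters and the example PSC name are mine, and nothing here actually runs cmsso-util.

```python
import shlex


def cmsso_command(external_psc, embedded_source, password,
                  username="administrator", domain="vsphere.local"):
    """Build the cmsso-util argument list for moving a VC to an external PSC.

    "reconfigure" is chosen when the VC currently uses its embedded PSC,
    "repoint" when it already uses an external PSC.
    """
    action = "reconfigure" if embedded_source else "repoint"
    return [
        "cmsso-util", action,
        "--repoint-psc", external_psc,
        "--username", username,
        "--domain-name", domain,
        "--passwd", password,
    ]


if __name__ == "__main__":
    # Hypothetical PSC name and placeholder password, printed for review only.
    print(shlex.join(cmsso_command("psc01.example.local", True, "password")))
    print(shlex.join(cmsso_command("psc01.example.local", False, "password")))
```

Printing the command for review before running it by hand is deliberate here, since “reconfigure” decommissions the embedded PSC.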

Troubleshooting and commands

The following commands can be used to check and test the PSC replication

- Find out what vSphere site a PSC is in

/usr/lib/vmware-vmafd/bin/vmafd-cli get-site-name --server-name localhost

"C:\Program Files\VMware\vCenter Server\vmafdd\vmafd-cli" get-site-name --server-name localhost

- Find out the name of the vSphere domain

/usr/lib/vmware-vmafd/bin/vmafd-cli get-domain --server-name localhost

"C:\Program Files\VMware\vCenter Server\vmafdd\vmafd-cli" get-domain --server-name localhost

- Find out what PSC a vCenter is pointing to

/usr/lib/vmware-vmafd/bin/vmafd-cli get-ls-location --server-name localhost

"C:\Program Files\VMware\vCenter Server\vmafdd\vmafd-cli" get-ls-location --server-name localhost

- Show all Platform Services Controllers in the vSphere domain

/usr/lib/vmware-vmdir/bin/vdcrepadmin -f showservers -h localhost -u administrator -w %password%

"%VMWARE_CIS_HOME%"\vmdird\vdcrepadmin -f showservers -h localhost -u administrator -w %password%

- Show the replication partners of a particular PSC

/usr/lib/vmware-vmdir/bin/vdcrepadmin -f showpartners -h localhost -u administrator -w %password%

"%VMWARE_CIS_HOME%"\vmdird\vdcrepadmin -f showpartners -h localhost -u administrator -w %password%

- Show replication partner status

/usr/lib/vmware-vmdir/bin/vdcrepadmin -f showpartnerstatus -h localhost -u administrator -w %password%

"%VMWARE_CIS_HOME%"\vmdird\vdcrepadmin -f showpartnerstatus -h localhost -u administrator -w %password%

- Create a PSC replication agreement

/usr/lib/vmware-vmdir/bin/vdcrepadmin -f createagreement -2 -h sourcepscfqdn -H destinationpscfqdn -u administrator -w %password%

"%VMWARE_CIS_HOME%"\vmdird\vdcrepadmin -f createagreement -2 -h sourcepscfqdn -H destinationpscfqdn -u Administrator -w %password%

- Remove a PSC replication agreement

/usr/lib/vmware-vmdir/bin/vdcrepadmin -f removeagreement -2 -h sourcepscfqdn -H destinationpscfqdn -u administrator -w %password%

"%VMWARE_CIS_HOME%"\vmdird\vdcrepadmin -f removeagreement -2 -h sourcepscfqdn -H destinationpscfqdn -u Administrator -w %password%

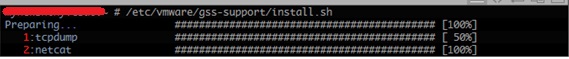

If you’re having communication/replication problems with the PSC, perhaps because you have firewalls in your environment, then you can use the following tools to test port connectivity.

curl can be used in place of telnet with the following command [ref12]

curl -v telnet://mypsc.domain.local:443

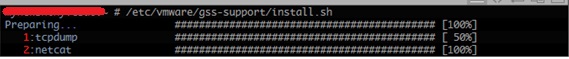
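If you’d rather script the same kind of check from a machine that has Python, something like the sketch below would do. It only tests TCP, so it covers the replication ports 389 and 2012 mentioned earlier but says nothing about UDP 389. The host name is the same placeholder used in the curl example, and the function names are my own.

```python
import socket

# TCP replication ports mentioned earlier in this post; UDP 389 is not covered.
REPLICATION_TCP_PORTS = (389, 2012)


def tcp_port_open(host, port, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and name resolution failures.
        return False


def check_ports(host, ports=REPLICATION_TCP_PORTS):
    """Map each port to whether it accepted a TCP connection."""
    return {port: tcp_port_open(host, port) for port in ports}


if __name__ == "__main__":
    # Placeholder host name; replace with your own PSC.
    for port, is_open in check_ports("mypsc.domain.local").items():
        print(f"TCP {port}: {'open' if is_open else 'blocked or closed'}")
```

A False result only tells you the connection failed from where the script ran; it can’t distinguish a firewall drop from a service that isn’t listening.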

You can install tcpdump and netcat on the VCSA using the following commands

/etc/vmware/gss-support/install.sh

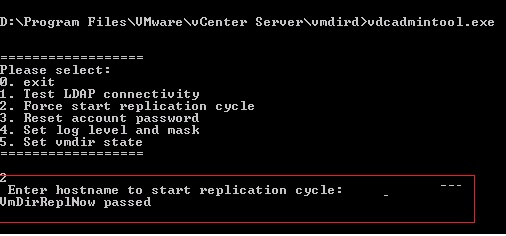

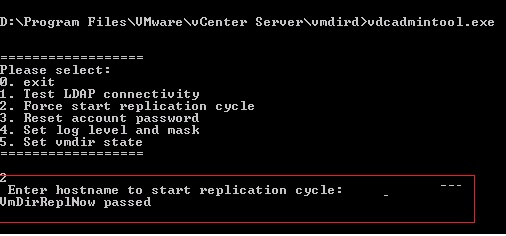

You can use VDC Admin Tool to test LDAP connectivity, force replication plus more…

You can find the tool here

VCSA

/usr/lib/vmware-vmdir/bin/vdcadmintool

Windows

"%VMWARE_CIS_HOME%"\vmdird\vdcadmintool.exe

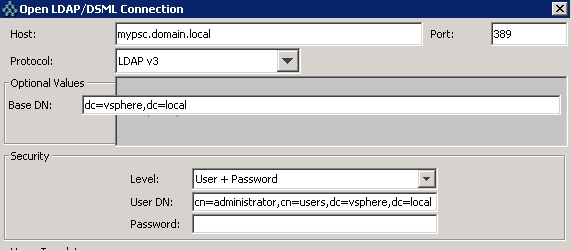

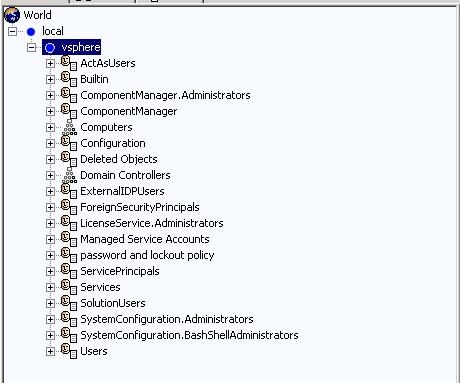

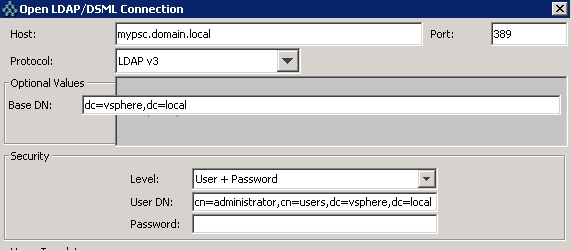

You can use JXplorer to browse LDAP using the following settings.

If you can’t WINSCP into the VCSA you’ll need to change the root shell.

chsh -s /bin/bash root

You can change the shell back by running

chsh -s /bin/appliancesh root

If you want to remove a PSC from the environment because it has failed, you can use

cmsso-util unregister --node-pnid PSCNAME.LOCAL.DOMAIN --username administrator@vsphere.local --passwd %password%

You can also use

vdcleavefed -h PSCNAME.LOCAL.DOMAIN -u administrator -w %password%

The password for the root account of the VCSA expires after 365 days by default. To set it to never expire, run

chage -M -1 -E -1 root

To change the password at the CLI type

passwd

Then confirm your new password.

You can find PSC relevant logs in the following locations

VCSA

/var/log/vmware/vmdird/vmdird-syslog.log

/var/log/vmware/vmdir/vdcrepadmin.log

Windows

C:\ProgramData\VMware\vCenterServer\logs\vmdird

List of references by link

[ref1]

https://kb.vmware.com/selfservice/microsites/search.do?language=en_US&cmd=displayKC&externalId=2113115

[ref2]

https://pubs.vmware.com/vsphere-65/index.jsp?topic=%2Fcom.vmware.vsphere.install.doc%2FGUID-E7DFB362-1875-4BCF-AB84-4F21408F87A6.html

[ref3]

https://kb.vmware.com/selfservice/microsites/search.do?language=en_US&cmd=displayKC&externalId=2113917

[ref4]

https://kb.vmware.com/selfservice/search.do?cmd=displayKC&docType=kc&docTypeID=DT_KB_1_1&externalId=2131191

[ref5]

http://pubs.vmware.com/vsphere-60/index.jsp?topic=%2Fcom.vmware.vsphere.install.doc%2FGUID-7223A0A9-9E3C-4093-8121-9BD8B3DB793F.html

[ref6]

https://kb.vmware.com/selfservice/search.do?cmd=displayKC&docType=kc&docTypeID=DT_KB_1_1&externalId=2086001

[ref7]

https://www.vmware.com/pdf/vsphere6/r60/vsphere-60-configuration-maximums.pdf

[ref8]

https://www.vmware.com/pdf/vsphere6/r65/vsphere-65-configuration-maximums.pdf#page=19

[ref9]

https://blogs.vmware.com/vsphere/2016/04/platform-services-controller-topology-decision-tree.html

[ref10]

https://kb.vmware.com/selfservice/microsites/search.do?language=en_US&cmd=displayKC&externalId=2064250

[ref11]

https://pubs.vmware.com/vsphere-60/index.jsp?topic=%2Fcom.vmware.vsphere.upgrade.doc%2FGUID-925370DD-E3D1-455B-81C7-CB28AAF20617.html

[ref12]